AI is everywhere; it’s all anyone talks about! In the corporate world, between market pressure, sales expectations, and leadership’s desire not to miss the boat, the question is no longer whether to adopt it, but how to go about it intelligently.

At Yogosha, an offensive security platform, we made a bold bet: an AI transformation in just 3 months. Once the initial momentum was there (see our article AI Transformation in 3 Months: How to Create Momentum with the QPQC Approach) and everyone was ready to move forward, a very concrete question landed on the desks of our Product and Tech teams: “Okay, but what exactly do we do, and with which tools?”

Moving from ambition to execution is where things get serious. It’s not enough to just want to “do AI”; you have to pick the right battles, get equipped without breaking the bank, and upskill without compromising data security (ISO 27001 demands it!).

In this article, we’re opening up the hood to share our operational methodology. We’ll talk about selecting use cases, our “in-house” infrastructure, our 3-day flash tests to choose the best tech (our famous “Spikes”), and how we kept the budget under control. Welcome to the technical backstage of our AI pivot.

I. How to choose use cases? AI Product Management

Doing AI is one thing, but how do you ensure you’re doing the right AI? Integrating Artificial Intelligence into your product has become, let’s face it, a necessity to stay competitive. Expectations are high among prospects, sales teams, and of course, management. But before diving in, it is vital to define which AI services to implement and how.

The key question we asked ourselves (and encourage you to ask) remains: Which problem can AI solve significantly better and more simply than our traditional approaches?

Step 1: Adapting the selection and prioritization process

We started with a simple step: listing all the potential issues and use cases that interested us, whether they concerned our platform’s features or improving our internal productivity. To avoid scattering our efforts, we focused on prioritizing this list by honestly answering the following questions:

- Can an expert system suffice, or is AI the only way?

- Does a current LLM already achieve the desired result without extra effort?

- Which use case truly generates the most immediate value for our users?

Finally, we rounded off our reflection by analyzing competitor offerings and, most importantly, deep-diving into our users’ core pain points.

Step 2: Choosing the two most promising use cases

Our decision took shape after following the evolution of tools like Xbow (an AI pentester) and having crucial exchanges with a client starting AI-assisted triage internally. These conversations guided us toward two major use cases:

1. AI-Assisted Triage: the time saver

Triaging vulnerability reports is notoriously time-consuming. In the short term, we anticipate an explosion in the volume of security reports due to the rise of AI-powered offensive tools. Qualifying these flaws quickly won’t be a luxury; it will be an operational survival issue.

We identified a major opportunity: developing an AI capable of detecting out-of-scope vulnerabilities and duplicates, and suggesting triage actions. For our Security Program Managers, it’s a precious time saver. For our clients, it guarantees they can focus on what matters – remediation – without being hampered by a lack of internal resources or skills for sorting reports.
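To make the triage idea concrete, here is a minimal sketch of the three outcomes described above (out of scope, possible duplicate, needs review). It is not our production logic: the `Report` fields, the `suggest_action` helper, the word-overlap similarity, and the 0.6 threshold are all assumptions chosen for illustration. In practice an LLM or an embedding model would replace the crude `jaccard` comparison.

```python
import re
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass
class Report:
    """Hypothetical, simplified vulnerability report."""
    report_id: str
    title: str
    asset_url: str


def tokens(text: str) -> set[str]:
    """Lowercased word set used for a crude similarity measure."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of words two titles have in common (0.0 to 1.0)."""
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def in_scope(report: Report, scope_domains: set[str]) -> bool:
    """True if the reported host is a scoped domain or one of its subdomains."""
    host = urlparse(report.asset_url).hostname or ""
    return any(host == d or host.endswith("." + d) for d in scope_domains)


def suggest_action(
    report: Report,
    known: list[Report],
    scope_domains: set[str],
    threshold: float = 0.6,  # assumed cut-off, to be tuned on real data
) -> str:
    """Suggest a triage action; a human still makes the final call."""
    if not in_scope(report, scope_domains):
        return "out_of_scope"
    for other in known:
        if jaccard(tokens(report.title), tokens(other.title)) >= threshold:
            return f"possible_duplicate_of:{other.report_id}"
    return "needs_review"
```

The function only suggests an action. Keeping the decision with the Security Program Manager is what makes this assisted triage rather than automated triage.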

2. Vulnerability detection & reporting: our offensive DNA

Yogosha’s mission is, in essence, to detect vulnerabilities to secure our clients’ assets. It was natural to opt for a second use case oriented toward offensive security. Why? Because we anticipate that all researchers will be equipped with AI tools in the coming months. Our AI’s ability to detect vulnerabilities and generate a report represents significant added value and opens doors to other opportunities (like ingesting results from vulnerability scanners).

Our golden rule: identify the problem

To define your use cases, here’s our main piece of advice: always focus on the fundamental business problems you want to solve. Take the time to explore whether AI is the right solution to improve a feature, and above all, consider how to implement it securely, ethically, and responsibly, by clearly defining the necessary data.

💡 If you can’t clearly formulate the problem to be solved and the expected result of the AI feature, it’s better to stop: it’s not a relevant use case. Developing an AI feature just out of obligation or to follow a trend risks costing you dearly in time and investment.

II. How to choose tools and technologies to operate with AI?

So, we’re launching into AI, but where do we start, how, and with what tools and technology? That was definitely the first question we had to answer! To get a clear picture, our team (Product and Tech) moved forward together, step by step, using a simple four-step method:

- Scoping: Setting boundaries and defining needs.

- Infra: Building our secure little lab.

- Exploration (Spikes): Testing tools thoroughly but quickly.

- Final Choice: Selecting the best solution for us.

Step 1: Defining constraints and needs

Before opening the toolbox, we had to ask the right questions. This is a crucial step to avoid getting scattered or making mistakes!

🔒 Our two big constraints:

When discussing AI, two topics always come up:

- Security: No way sensitive data wanders off onto the public internet. We needed total control.

- Costs: Public AI is great, but it bills by the token (every word, every character counts!). Use it a lot, and the bill stings. We had to master this budget.

🤖 Our goal: “Intelligent Agents”

Our main need was to create AI agents that could take over tedious and time-consuming tasks. They needed to:

- Work with our internal AI.

- Connect to our systems (via API or secure MCP connector).

- Allow us to test our prompts on different LLMs to choose the most efficient one, and also to version them.

- Provide us with tools but also allow us to create our own.
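The third requirement (testing the same prompt on several LLMs and versioning it) can be pictured with a small sketch. Everything here is hypothetical: `PromptRegistry`, `compare`, and the idea of identifying a version by a content hash are illustrative choices, not features of any particular tool. A “model” is simply any function that takes a prompt and returns text.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Callable

# A "model" is any callable taking a prompt and returning text (assumption).
Model = Callable[[str], str]


@dataclass
class PromptRegistry:
    """Keeps every version of each named prompt, identified by content hash."""
    versions: dict[str, list[tuple[str, str]]] = field(default_factory=dict)

    def save(self, name: str, template: str) -> str:
        """Store a new version unless it is identical to the latest one."""
        digest = hashlib.sha256(template.encode("utf-8")).hexdigest()[:12]
        history = self.versions.setdefault(name, [])
        if not history or history[-1][0] != digest:
            history.append((digest, template))
        return digest

    def latest(self, name: str) -> str:
        """Return the most recently saved template for this prompt name."""
        return self.versions[name][-1][1]


def compare(prompt: str, models: dict[str, Model]) -> dict[str, str]:
    """Run the same prompt against every model and collect the answers."""
    return {label: call(prompt) for label, call in models.items()}


# Usage with stand-in models; real ones would call an LLM endpoint.
registry = PromptRegistry()
registry.save("triage", "Classify this report: {report}")
answers = compare(
    registry.latest("triage").format(report="XSS in search box"),
    {"model_a": lambda p: p.upper(), "model_b": lambda p: p[::-1]},
)
```

Comparing answers side by side for a fixed prompt version is what lets you change one variable at a time: either the prompt or the model, never both.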

Step 2: Setting up a private AI sandbox

To meet security and cost constraints, our Infra team had a clear idea: we would build our own private AI environment.

- The lab: the infrastructure team launched a POC to install a private AI. Basically, we took a large server with a powerful graphics card (an 80GB H100) and loaded various open-source AI models onto it (thanks Ollama.com and Hugging Face!).

- The winning calculation: yes, the machine is expensive upfront. But the huge advantage is that once we have the server, we no longer pay for tokens! No more paying per request. It’s an investment that gives us complete security and predictable usage costs.

- Counting without paying: the Infrastructure team even set up a tool to count all the tokens we would have consumed if we had used a public API. This allows us to compare the cost of our machine to the hefty bill we would have received otherwise!

The result? We had our own secure internal server. Our two major constraints were solved!
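The “counting without paying” idea can be sketched in a few lines. This is not our actual tool: `TokenMeter` is a hypothetical name and the prices passed to it are placeholders, not real rates. The field names `prompt_eval_count` and `eval_count` are the usage counters that Ollama returns in its generate responses; another inference server would need different keys.

```python
from dataclasses import dataclass


@dataclass
class TokenMeter:
    """Accumulates token counts and prices them at a public API's rates."""
    input_price_per_million: float   # placeholder price, in euros
    output_price_per_million: float  # placeholder price, in euros
    input_tokens: int = 0
    output_tokens: int = 0

    def record(self, response: dict) -> None:
        """Add the usage counters of one model response to the totals."""
        # Keys as reported by Ollama; missing counters count as zero.
        self.input_tokens += response.get("prompt_eval_count", 0)
        self.output_tokens += response.get("eval_count", 0)

    def shadow_cost(self) -> float:
        """What the recorded traffic would have cost on the public API."""
        return (
            self.input_tokens * self.input_price_per_million
            + self.output_tokens * self.output_price_per_million
        ) / 1_000_000


# Usage: feed each response from the private server into the meter.
meter = TokenMeter(input_price_per_million=2.0, output_price_per_million=8.0)
meter.record({"prompt_eval_count": 1200, "eval_count": 300})
```

Comparing `meter.shadow_cost()` over a month with the server bill gives the break-even picture without ever sending a request to a public API.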

Step 3: Finding the best tech in just 3 days!

The environment is ready. Now we choose which technology we’ll use to build our agents. That’s where the Spike comes in.

💡 Quick Reminder: What’s a “Spike”?

If you’re familiar with Agile methodologies, you probably know the term already. A Spike is a short, focused technical study.

- The objective: We want to explore a vague topic, but we don’t want to spend weeks on it! It’s time-boxed (we set a limited time) and structured (we list the study’s objectives and non-objectives).

- The deliverable: A clear answer that helps us make a decision and reduce risk before fully committing.

The principle? It’s better to test for three days and conclude “it doesn’t work” than to code for three weeks only to realize you’re on the wrong track.

In our specific case, this allowed us, within a limited time investment, to acquire the necessary knowledge to reduce risk, even though we didn’t really know where we were going or how to proceed.

🔬 Our Big 3-Day Test

We selected 4 technologies that seemed promising (one per volunteer developer, no hard feelings!):

- LangChain / LangGraph

- N8N

- CrewAI

- LLPhant

The objective was simple: each technology had to pass the test of our Step 1 requirements. At the end of the 3 days, we would know which one was the big winner for us!
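One way to keep four parallel spikes comparable is to give each developer the same checklist. The sketch below is an assumption about how that could look, not a record of what we used: the `Spike` class and the requirement identifiers are made up, derived from the Step 1 needs, and the example findings are placeholders rather than real results.

```python
from dataclasses import dataclass, field

# Step 1 needs, rewritten as checklist identifiers (names are illustrative).
REQUIREMENTS = (
    "works_with_internal_ai",
    "connects_via_api_or_mcp",
    "multi_llm_prompt_testing",
    "prompt_versioning",
    "built_in_and_custom_tools",
)


@dataclass
class Spike:
    """One time-boxed study of one technology."""
    technology: str
    timebox_days: int = 3
    findings: dict[str, bool] = field(default_factory=dict)

    def unmet(self) -> list[str]:
        """Requirements not confirmed yet (unanswered ones count as unmet)."""
        return [r for r in REQUIREMENTS if not self.findings.get(r, False)]

    def passes(self) -> bool:
        """True only when every requirement has been confirmed."""
        return not self.unmet()


# Placeholder data for a fictional tool, not a result from our spikes.
spike = Spike(
    "ExampleFramework",
    findings={"works_with_internal_ai": True, "connects_via_api_or_mcp": True},
)
print(spike.technology, "still to check:", spike.unmet())
```

Treating an unanswered requirement as unmet is deliberate: at the end of the time box, “we didn’t get to it” is a finding too.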

Step 4: Relying on the collective for an informed choice

After these three intensive days of spikes, the team gathered for deliberation! It was the moment of truth where each developer presented their discoveries, frustrations but also successes, and conclusions on the technology they had tested.

🔎 The Dev comparison:

- CrewAI & LangChain / LangGraph: The Python heavyweights. They tick all the boxes (agents, MCP, versioning…). LangChain is the historical pioneer for linear chains, while LangGraph allows the creation of highly complex cyclic workflows. The catch: they are Python frameworks, and at Yogosha, Python isn’t our “native” language. The learning curve felt too steep to get something out quickly.

- LLPhant: The promising challenger. Inspired by LangChain but written in PHP, it’s a code-oriented library: orchestration, error handling, connectors, and so on are entirely up to us. More control, but at the cost of significant technical effort that would quickly increase development time. Furthermore, this library is still a bit young, even though it seems to be well-maintained.

- N8N: The visual outsider. It also meets our technical requirements (agents, API, MCP). Versioning is a bit different (integrated history or Git backup in the paid version), but it compensates with a phenomenal library of integrated tools (Google, Slack, Discord, etc.). The biggest advantage: it’s visual and therefore easily accessible to the product team as well! It’s incredibly quick to learn, and we can even add workflow evaluations to check that our agents behave as expected.

🎉 The Winner: N8N!

So, why did we ultimately choose N8N?

While pure-code frameworks are powerful, N8N will allow us to build complex workflows without spending three months in training. It’s ideal for creating proofs of concept (POCs) and quickly validating our ideas.

What about industrialization?

It’s true, production deployment on our self-hosted platforms is still an open question. But we’ve decided to put that on hold for now. Why? Because the focus of our first AI cycle was to test, test, and test some more.

We want to first find the right level of granularity for our agents and refine our prompts. In this fast-paced ecosystem, considering industrialization today, when better solutions might exist in six weeks, was premature. We validate the idea first, then we industrialize!

🗓️ And after a few weeks of use, where are we at?

After a few weeks of working with N8N, the results are very positive. We’ve confirmed that:

- Not having to code in an unfamiliar language, along with all the tools provided by N8N, allowed us to quickly create quite complex, powerful, and tested/evaluated workflows.

- The visual interface is a huge advantage for the Product team, who can track the progress of automations without having to delve into the code.

- The integration with our private AI environment works perfectly, keeping us within our budget (no per-token billing!) and our required level of security.

Choosing N8N has therefore validated our methodology: taking the time to clearly define the constraints, secure the infrastructure, and conduct rapid testing allowed us to select the tool best suited to our current situation.

III. How to Upskill as a Dev Team?

Okay, we’ve chosen our tools, but how do we ensure the whole team is proficient and can use them effectively without stress? We all agree: training takes time and practice, especially when it comes to AI!

Here’s how we organized our skills development by creating an optimal learning environment:

1. Safety First: A Sandbox for Safe Fun

When it comes to learning and experimentation, you need a playground where you can make mistakes without consequences. For us, this was an absolute necessity, especially with our ISO 27001 certification.

- The Infrastructure Gift: As we’ve seen, our Infrastructure team has provided us with a secure internal environment. It’s kind of our Yogosha “sandbox”!

- The Advantage? We can try connecting all our internal tools, test unusual configurations, and even make mistakes… without ever jeopardizing our production systems or customer data. It’s the perfect place to have fun safely!

2. Dedicate time to learning (and relaxing!)

The best training is the kind you have time to complete without the pressure of production. And for that, using the Shape Up methodology is fantastic; it provides a two-week cooldown period.

So we took advantage of the two weeks of cooldown preceding our first AI work cycle to get started and make it a pure learning time.

⇒ Zero Pressure: We were able to read articles, watch videos, and run our first tests without the sword of Damocles of deliverables hanging over our heads. It was ideal for a smooth start!

3. Practice, practice, practice!

AI isn’t just theory. You have to get your hands dirty:

- Development and Experimentation Time: We clearly dedicated time to developers so they could experiment with the new technologies we had chosen. This is work time, not “extra” time! A full cycle (6 weeks) was dedicated to setting up Proofs of Concept (POCs).

- Pair Programming: The best way to learn quickly and effectively is to work in pairs. We’re all fully remote, but that’s no problem: a video call with screen sharing and we’re good to go! One person codes, the other observes and advises. This allows us to pass on knowledge very quickly, exchange viewpoints directly, and avoid getting stuck alone for hours.

4. Sharing and exchanging: Collective knowledge

Learning is a collective adventure! To ensure expertise spreads throughout the team and beyond, we’ve focused on continuous sharing:

- Tech Reviews (1h per week): To make sure our technical choices are sound and that we’re following best practices. It’s a critical and educational moment.

- Demos (as often as possible): Showing others what we’ve accomplished (or even our constructive failures!). Seeing the technology in action makes things much clearer for everyone!

- Geek Fridays (half-day per cooldown): This is our informal time to discuss our discoveries, our favorite tech, our readings… Perfect for sharing the most relevant articles and videos we’ve found on AI!

IV. Mastering costs during the POC phase

Innovation is great, but you have to avoid unnecessary expenses! And when it comes to AI, it’s easy to see the costs spiral out of control…

Infrastructure: Controlled Costs to Ensure Data Security

As we mentioned, we opted for a dedicated server with an H100 card. It’s the absolute best for security, but it’s a bit pricey: around €2,500/month. That’s the price of peace of mind for our confidential customer data.

But we found a way to reduce the bill during the testing phase:

- The “35-Hour” Server (or almost): Why leave a high-powered machine running around the clock while the team sleeps? So we scheduled the server to run only during a defined window: 12 hours a day, 5 days a week. The result: the bill dropped from €2,500 to €800/month. Massive savings without impacting our ability to work!

- N8N in “Budget” mode: For the automation tool, we started with the free version. Admittedly, we’re missing a few features (like workflow versioning), but it’s more than enough to validate our ideas without immediately reaching for the credit card.
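For the server schedule, the arithmetic is worth spelling out. The sketch below assumes an 08:00 to 20:00 window (the article only says 12 hours a day, 5 days a week, so the exact hours are a guess) and uses hypothetical helper names. It checks whether the server should be up at a given moment and what share of the week that window represents.

```python
from datetime import datetime

OPEN_HOUR, CLOSE_HOUR = 8, 20   # assumed window: 12 hours a day
OPEN_WEEKDAYS = range(0, 5)     # Monday (0) to Friday (4)


def server_is_open(moment: datetime) -> bool:
    """True if the server should be running at this local date and time."""
    return (
        moment.weekday() in OPEN_WEEKDAYS
        and OPEN_HOUR <= moment.hour < CLOSE_HOUR
    )


def weekly_uptime_share(hours_per_day: int = 12, days_per_week: int = 5) -> float:
    """Fraction of a full week (168 hours) during which the server runs."""
    return hours_per_day * days_per_week / (24 * 7)


def savings_share(full_price: float, reduced_price: float) -> float:
    """Fraction of the original bill that is no longer paid."""
    return 1 - reduced_price / full_price


print(f"uptime share: {weekly_uptime_share():.1%}")
print(f"savings: {savings_share(2500, 800):.0%}")
```

Sixty hours out of 168 is roughly 36% of the week, and going from €2,500 to €800 is a 68% saving. The two figures are close but not identical, since the final bill depends on the hosting provider’s pricing and not only on uptime.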

Project Management: The “Shape Up” Method to the Rescue

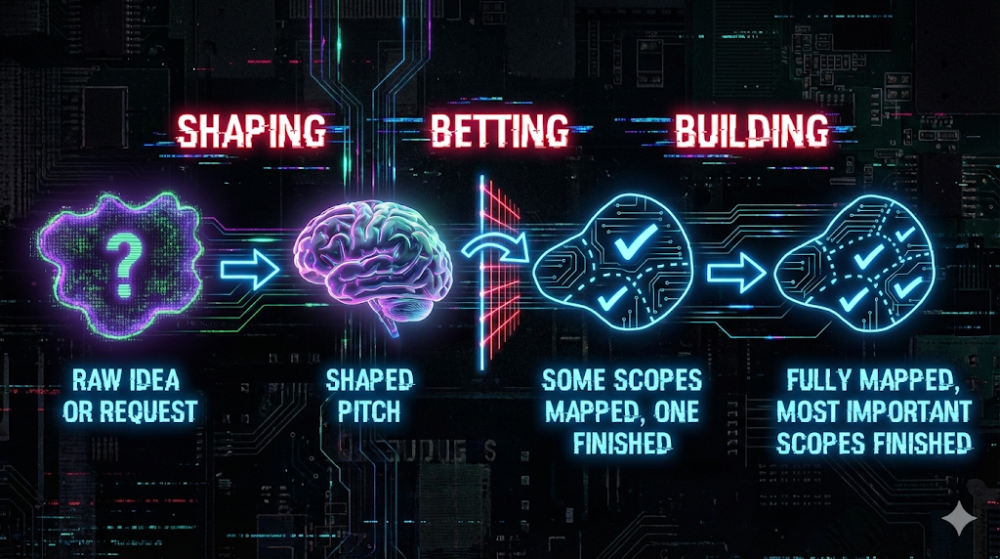

Beyond the technical aspects, it’s our way of working that protects us from projects that drag on forever. We use the Shape Up methodology.

The principle? We work in 6-week cycles.

- A strict framework: For this AI project, we gave ourselves 6 weeks to deliver results. Not a day more! No matter where we are at the end of the cycle, we stop and take stock. This avoids the “tunnel vision” effect where we spend money without seeing the end in sight.

- The “Betting Table” (The moment of truth): This is where everything is decided. At the end of the cycle, we bring together the stakeholders. We show them what we’ve done, the maturity of the project, and we discuss it.

- Do we continue or stop? It’s a collective decision. Either we decide to “put another coin in the machine” because the potential is there, or we prioritize another, more urgent project. We choose together, with full knowledge of the facts, whether the investment is still worthwhile.

In summary: Between our server’s office hours and the strict framework of our 6-week cycles, we managed to transform a complex and costly subject into a controlled and hyper-responsive experiment.

Closing Words: From Intuition to Mastered Reality

In three months, we moved from strategic reflection to an operational reality where our teams are “shipping” concrete agents. If we had to summarize what we learned by opening the hood, it’s that tech is nothing without method.

Choosing N8N wasn’t just a tool choice; it was a choice for agility. Building our own private infra wasn’t just for “geek” pleasure; it was to guarantee our clients’ security. And using Spikes and Shape Up was our safeguard against letting innovation turn into a financial black hole.

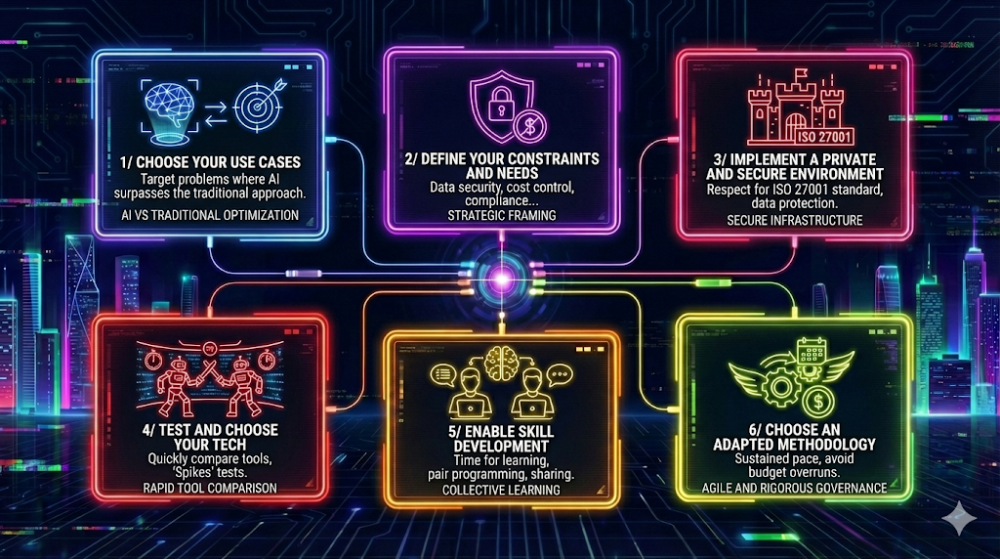

💡 Takeaways: 6 keys to an AI pivot

If you need to retain the core principles of our approach for your own organization, here are the key points:

- Choose use cases aligned with business reality

- Define constraints and needs before diving in

- Set up a secure environment (private whenever possible)

- Test and select your tech stack in 3 days

- Invest time in upskilling… at least 2 full weeks

- Adopt a methodology tailored to frugal experimentation

The momentum is there, the tools are in place, and the team has ramped up. But the journey doesn’t end with validating a proof of concept (POC). The next challenge? Transforming these trials into industrial-grade features at the heart of our platform!

👉 Check out our article AI: From POC to Feature — The Guide to Safe Industrialization

And you? How did you tackle these organizational and skills-building challenges? Between time management, technology selection, and cost control, what solutions did you test and approve within your teams?