
Your AI POC has launched, the lights are green, and internal excitement is at an all-time high. But beware of the mirage: this is where the most perilous phase begins.

For many organizations, the transition from prototype to production turns into a dead end. Navigating this shift successfully requires leaving the “sandbox” behind to build a solution that is reliable, scalable, and cyber-secure.

At Yogosha, an Offensive Security Platform, we went through this cycle to bring our AI-assisted triage agent to life. We learned that you must first frame the unknown to extract value before you can turn a trial run into a robust, compliant solution. How do you manage a POC without losing your way? How do you scale up without sacrificing security?

In this post, we’re taking you behind the scenes of our experience: how to manage experimentation with agility while industrializing and securing every step of the way.

I. From POC to Value: our insights on avoiding “scope creep”

At what point does a POC actually generate value? How do you master its timeline and scope? When is the right time to stop? And does value necessarily have to result in a final delivery? We’re sharing our experience to shed some light on these questions.

A POC is an adventure, but it comes with two major risks: “drifting” prematurely into production and over-investing in a proof of concept that has no concrete future. Here is how our team learned to navigate these waters and avoid these pitfalls.

How to frame the unknown in R&D?

POCs are synonymous with R&D and, by nature, with the unknown. How do you effectively wrap a framework around a subject that is still fuzzy?

We’ve learned that every POC needs a clear objective, a precise Definition of Done, and, most importantly, explicit non-objectives. Together they give the team a constant point of reference and keep the work from veering off course. For the same reason, we find it vital to set a firm deadline for completion.
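As an illustration, this framing can be written down as a small, explicit structure. The sketch below is only one possible shape, not a description of our internal tooling: the `PocCharter` class, its field names, the example values, and the date are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class PocCharter:
    """Hypothetical one-page framing for a POC (all names are illustrative)."""
    objective: str
    definition_of_done: list[str]
    non_objectives: list[str]
    deadline: date

    def is_in_scope(self, topic: str) -> bool:
        # A topic explicitly listed as a non-objective is out of scope.
        return topic.lower() not in {n.lower() for n in self.non_objectives}

    def is_overdue(self, today: date) -> bool:
        # The deadline day itself still counts as on time.
        return today > self.deadline


# Example values are placeholders, not the charter of a real project.
charter = PocCharter(
    objective="Check whether an AI agent can flag out-of-scope and duplicate reports",
    definition_of_done=["Evaluated on a labeled sample of past reports"],
    non_objectives=["production deployment", "automatic report closure"],
    deadline=date(2025, 6, 30),
)

print(charter.is_in_scope("Production deployment"))
print(charter.is_overdue(date(2025, 7, 1)))
```

Writing non-objectives down next to the objective makes it easier to say no to an attractive side topic in the middle of the POC.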

Once the expectations are set, all that remains is to map out the path to get there.

Experimentation and the right to fail

Since a POC is, by definition, an experiment, we accepted that we shouldn’t try to define everything from the start. Within that frame, there are no rigid specifications or strict intermediate deadlines. Unlike a standard iterative development project, we gave ourselves permission to embrace mistakes, experiment, pivot, and build the path progressively.

This approach fosters creativity, and it requires the freedom to move fast. While it is crucial not to set too many limits, security remains non-negotiable, especially in a sector as sensitive as ours.

Because R&D and POCs are not everyday activities for every team, we found it valuable to lean on our existing methodology and proven processes (ShapeUp). We strive to maintain our established rituals, syncs, and organizational structure to avoid disrupting the collective workflow.

Key lessons: rapid confrontation and the role of Business Teams

Our main takeaway: it is essential to pit the POC against reality as quickly as possible and avoid over-iterating on prompts based on overly specific input data. We’ve seen how easy it is to spend hours tweaking a prompt to optimize a result, only to unconsciously bias it toward a specific expectation. Rapidly establishing evaluations allows you to test against a large, relevant volume of data, ensuring you validate your direction early on.
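To show what "rapidly establishing evaluations" can look like in practice, here is a minimal sketch of an evaluation harness. It is an assumption-laden illustration rather than our actual pipeline: the label names, the `evaluate` function, and the `keyword_baseline` stand-in (which replaces the real model call) are hypothetical.

```python
from collections import Counter
from typing import Callable


def evaluate(classify: Callable[[str], str], dataset: list[tuple[str, str]]) -> dict:
    """Run `classify` over (report_text, expected_label) pairs and summarize."""
    confusion = Counter()
    for text, expected in dataset:
        predicted = classify(text)
        confusion[(expected, predicted)] += 1
    correct = sum(n for (exp, pred), n in confusion.items() if exp == pred)
    total = len(dataset)
    return {
        "accuracy": correct / total if total else 0.0,
        "confusion": dict(confusion),
    }


def keyword_baseline(text: str) -> str:
    """Stand-in for the real model call: a trivial keyword rule."""
    lowered = text.lower()
    if "already reported" in lowered:
        return "duplicate"
    if "staging" in lowered:
        return "out_of_scope"
    return "valid"


# Tiny invented sample; a real evaluation needs a large, relevant volume of data.
sample = [
    ("XSS on login page", "valid"),
    ("Same issue as already reported last week", "duplicate"),
    ("SQL injection on staging server", "out_of_scope"),
    ("Open redirect on partner domain", "out_of_scope"),
]
report = evaluate(keyword_baseline, sample)
print(report["accuracy"])  # 0.75
```

The confusion counts matter as much as the accuracy: they show which kind of mistake a prompt change introduces, which is hard to see when tweaking a prompt against a handful of inputs.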

Working with Business teams (subject matter experts) is challenging because of their competing priorities and limited availability. A key lesson from our experience: do not involve them in every single stage of the POC. Even though they hold the necessary expertise, they are often absorbed by day-to-day operations (the RUN) and have little bandwidth. We find it much more efficient to pull them in on specific topics with a clear, well-defined scope, which maximizes their impact without burning them out.

Ultimately, the goal of a POC is not production delivery, but rather to confirm a project’s feasibility and its value to users. You can absolutely communicate the results of a POC without ever putting it into production: the learning itself is, in fact, business value.

Once the business value is validated and the experimental framework is under control, a crucial question arises: are we ready for the real world? While the POC allowed us to learn without risk, the “feature” is a promise made to our customers. We must leave the sandbox and enter the arena of industrialization.

II. Moving from POC to Feature: ensuring security at scale

Once the POC has proven its value, the excitement is palpable: we finally have our triage agent capable of detecting out-of-scope reports and duplicates. The temptation is to hit the “Prod” button immediately.

But this is exactly when you need to keep a cool head. Our mantra has been clear from the start: a POC is never pushed to production as-is. Why? Because a POC is an exploration, whereas a feature is a commitment to security and reliability for our customers. Here is how we structured this critical transition.

The Risk Assessment: the moment of truth

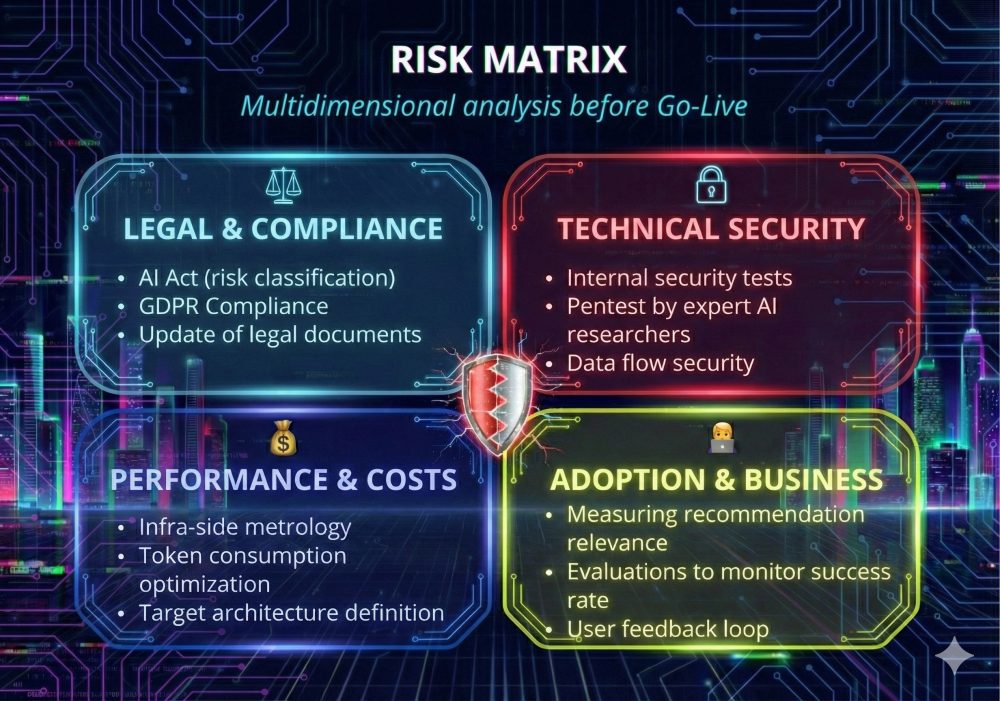

Before releasing the final version, we gathered for a deep-dive risk analysis—an exercise we perform for every new feature. The goal is simple: eliminate uncertainty to prevent the project from veering off track. Several risk categories emerged: legal, security, costs, usage, workflow reliability, and the relevance of AI recommendations.

De-risking the legal aspect

Addressing legal risks was our top priority to validate our scope of action. We focused on three pillars:

- Data: Where does it travel and how is it protected?

- AI Models: How reliable are they? Are they “black boxes”?

- Regulation: Are we aligned with the AI Act and GDPR?

One question sparked a lot of debate: encryption and SecNumCloud. Do we have the right not to be compliant? Do we want to be? Before deploying even a Beta version, it is paramount to offer total transparency to pilot customers regarding the model used and how their data is managed. A full update of our legal documentation is, of course, a prerequisite for deploying any AI feature on our platform.

💡The lawyers’ perspective: Securing the Legal Ground

What are the best practices for integrating an AI feature into your product?

Integrating AI must be managed through technical, legal, and regulatory lenses.

The primary objective is to secure the origin of AI and the data used. This involves strengthening contractual and legal protections to achieve regulatory compliance, particularly that imposed by the AI Act.

| Domain | Key Best Practices |
|---|---|
| Technical & Legal | AI Model: Clearly identify the model’s origin (proprietary vs. third-party). |
| Contractual | Licenses & Sub-licensing: Verify license conditions and ensure sub-licensing rights are correctly transferred and authorized. |
| Contractual | Contractual Framework: Systematize the integration of specific clauses governing the use of AI models in contracts. |
| Compliance & Legal | Regulation: Identify and ensure compliance with all applicable regulations (e.g., GDPR, AI Act). |
| Regulatory (AI Act) | Map AI systems: Determine if the AI System (AIS) falls into a specific category (notably “high risk”) of the AI Act and establish a compliance plan. |
| Operational | Limitations: Know the model’s scope of use and its potential limitations. |
| Suppliers | Supplier Questionnaire: Integrate specific questions about AI (security, compliance, standards, infrastructure, etc.) into pre-contractual questionnaires. |
| Data | Master datasets: Use strictly governed datasets, both internally and contractually, for training and inference. |

Securing the technical aspect

Because we operate in the cybersecurity sector, technical risk is a non-negotiable priority for us. This vigilance begins as early as the POC development phase: thanks to our teams’ expertise, we conducted an initial round of stress tests and security audits. This rigor allowed us to identify and patch a Prompt Injection vulnerability during the development stage.

True to our release processes, we leave nothing to chance: every major update is systematically subjected to a pentest before going live. For our AI triage agent, we decided to go even further by involving researchers specialized in AI security. Their mission: to push the model to its limits and guarantee an optimal level of protection.

Architecture: industrializing without losing agility

Once the risks are identified, the question of the technical stack and the balance between performance and cost arises. We addressed the following questions:

- Should we keep the framework and stack chosen for the POC? If so, how do we industrialize it and monitor the vulnerabilities of these tools?

- Do we want to version our prompts? Should they be updated live or follow a classic release cycle?

- Should we opt for an AI SaaS module or a self-hosted solution to meet various data hosting requirements?

- How can we optimize costs? What are the long-term maintenance and evolution costs?
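On the prompt versioning question, one option among others is to treat prompts like any released artifact: each version is immutable and identified by a name, a version number, and a content hash. The sketch below illustrates that idea only; `PromptVersion`, `PromptRegistry`, and the prompt texts are hypothetical and do not describe the choice we made.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptVersion:
    name: str
    version: str
    template: str

    @property
    def checksum(self) -> str:
        # Short content hash, so logs can show which exact text was used.
        return hashlib.sha256(self.template.encode("utf-8")).hexdigest()[:12]


class PromptRegistry:
    """In-memory registry; in practice this could live in the code repository."""

    def __init__(self) -> None:
        self._prompts: dict[tuple[str, str], PromptVersion] = {}

    def register(self, prompt: PromptVersion) -> None:
        key = (prompt.name, prompt.version)
        if key in self._prompts:
            # Published versions are immutable: a change needs a new version.
            raise ValueError(f"{prompt.name}@{prompt.version} already registered")
        self._prompts[key] = prompt

    def get(self, name: str, version: str) -> PromptVersion:
        return self._prompts[(name, version)]


registry = PromptRegistry()
registry.register(PromptVersion("triage", "1.0.0", "Classify this report: {report}"))
registry.register(PromptVersion("triage", "1.1.0", "Classify the report below.\n{report}"))

prompt = registry.get("triage", "1.1.0")
rendered = prompt.template.format(report="XSS on login page")
print(prompt.version, prompt.checksum)
```

With this shape, a classic release cycle means new versions ship with the code, while a live update means switching the version read at runtime. In both cases every suggestion can be traced back to the exact prompt that produced it.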

Our infrastructure team conducted an internal study to define the target architecture and precisely estimate token consumption per report processed. Every option was weighed to ensure data security and sustainable maintenance over the long term.
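The arithmetic behind such an estimate is simple enough to sketch. The example below assumes a provider that bills input and output tokens separately, per million tokens. The `TokenEstimate` class and every figure in it are placeholders, not measurements from our platform or prices from any real provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenEstimate:
    input_tokens_per_report: int
    output_tokens_per_report: int
    # Prices per million tokens, in whatever currency the provider bills.
    input_price_per_million: float
    output_price_per_million: float

    def cost_per_report(self) -> float:
        return (
            self.input_tokens_per_report * self.input_price_per_million
            + self.output_tokens_per_report * self.output_price_per_million
        ) / 1_000_000

    def monthly_cost(self, reports_per_month: int) -> float:
        return self.cost_per_report() * reports_per_month


# Placeholder figures chosen only to make the arithmetic easy to follow.
estimate = TokenEstimate(
    input_tokens_per_report=4_000,
    output_tokens_per_report=500,
    input_price_per_million=3.0,
    output_price_per_million=15.0,
)
print(round(estimate.cost_per_report(), 4))
print(round(estimate.monthly_cost(1_000), 2))
```

Keeping input and output tokens separate matters because they are often priced differently, and because prompt length and answer length are tuned by different levers.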

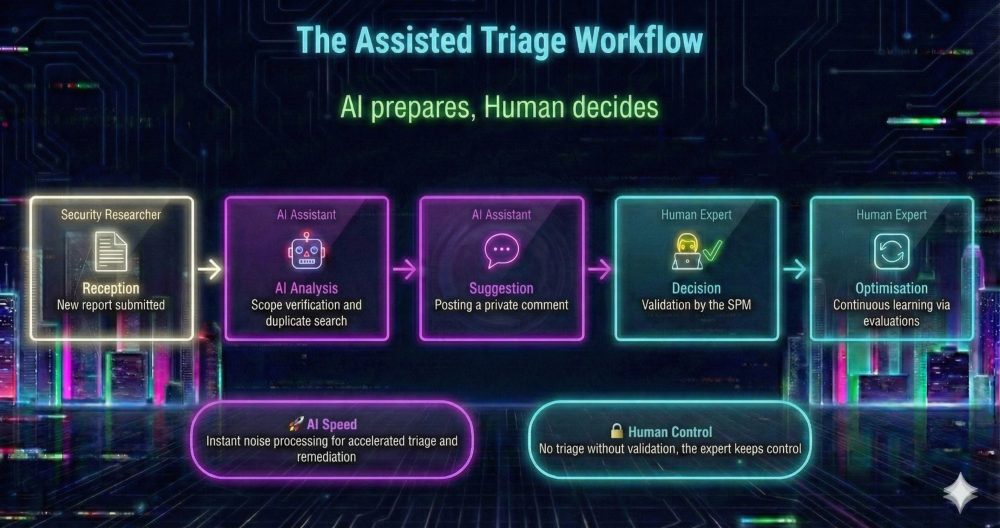

User experience: humans remain in control (Human-in-the-loop)

Integrating an AI service into a platform raises two major questions:

- How and where is the AI service configured?

- What triggers its action?

For the integration into the Yogosha platform, our philosophy was built on transparency and control. We designed the AI as an assistant that suggests actions but always requires human validation. Nothing happens “behind the back” of the user.

Our design principles:

- The “Opt-in” option: We believe it is essential for every organization to be able to activate or deactivate the AI service at any time. AI is never forced upon the user; it is offered as a decision-support tool. Why this choice? To respect user autonomy, comply with regulatory requirements, and build trust progressively.

- Automatic trigger with human validation: For triage, we linked the AI to the report reception flow. As soon as a vulnerability is submitted, the assistant gets to work to prepare the ground for the Security Program Manager. However, the final decision always remains human.

The user flow:

- Receipt of a new vulnerability report.

- Automatic AI analysis (if the service is enabled for the client’s asset).

- A private comment is posted on the report with the AI’s triage suggestion.

- The Security Program Manager triages the report (accepts or rejects).

- Comparison: The human triage result is compared with the AI suggestion for continuous improvement.
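The flow above can be sketched in a few lines of code. This is a simplified illustration under stated assumptions, not the platform's implementation: the `Report` structure, the two handler functions, the decision values, and the `fake_suggest` stand-in for the model call are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Report:
    report_id: str
    text: str
    private_comments: list[str] = field(default_factory=list)
    ai_suggestion: Optional[str] = None
    human_decision: Optional[str] = None


def on_report_received(report: Report, ai_enabled: bool,
                       suggest: Callable[[str], str]) -> None:
    """Reception and analysis: run only when opted in, and only suggest."""
    if not ai_enabled:
        return  # Opt-in: the AI does nothing unless the service is enabled.
    report.ai_suggestion = suggest(report.text)
    report.private_comments.append(f"AI triage suggestion: {report.ai_suggestion}")


def on_human_triage(report: Report, decision: str) -> Optional[bool]:
    """Human triage and comparison: return whether the AI agreed, if it ran."""
    report.human_decision = decision
    if report.ai_suggestion is None:
        return None
    return report.ai_suggestion == decision


def fake_suggest(text: str) -> str:
    # Stand-in for the model call.
    return "reject" if "staging" in text.lower() else "accept"


report = Report("R-1", "SQL injection on staging server")
on_report_received(report, ai_enabled=True, suggest=fake_suggest)
agreed = on_human_triage(report, "reject")
print(report.private_comments, agreed)
```

Note that the AI path never writes `human_decision`: the only state it can change is its own suggestion and a private comment, which is what keeps the final decision human.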

Test, Measure, Learn

A POC confirms viability; a Beta must confirm reliability. Before launching this stage, three prerequisites are essential:

- Test with real production data to measure workflow relevance. You need to know what you want to measure, on what data, and avoid biases in input data. For our assisted triage use case, we ran the workflow on a representative sample of more than 300 real reports from our Yogosha internal programs, already manually triaged and closed.

- Implement evaluations to monitor the workflow’s success rate. They let us measure the usage, relevance, reliability, and responsiveness of the workflow once it is in users’ hands.

- Instrument the infrastructure to measure the number of tokens used per query, so you can optimize AI usage costs and monitor machine performance.
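As a rough illustration of the last two prerequisites, the sketch below aggregates per-query records into a few headline figures. The `QueryRecord` fields, the `summarize` function, and the sample values are hypothetical. A real setup would more likely send these measurements to an existing monitoring stack.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class QueryRecord:
    input_tokens: int
    output_tokens: int
    latency_seconds: float
    agreed_with_human: bool


def summarize(records: list[QueryRecord]) -> dict:
    """Aggregate per-query records into the figures a dashboard might show."""
    if not records:
        return {"queries": 0}
    return {
        "queries": len(records),
        "total_tokens": sum(r.input_tokens + r.output_tokens for r in records),
        "mean_latency_seconds": mean(r.latency_seconds for r in records),
        "agreement_rate": sum(r.agreed_with_human for r in records) / len(records),
    }


# Invented sample values, for illustration only.
records = [
    QueryRecord(3_800, 420, 2.0, True),
    QueryRecord(4_100, 510, 3.0, True),
    QueryRecord(3_950, 480, 4.0, False),
    QueryRecord(4_200, 530, 3.0, True),
]
summary = summarize(records)
print(summary)
```

Tracking agreement with the human decision next to tokens and latency puts relevance and cost in the same view, which helps when deciding whether a cheaper configuration is still good enough.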

Finally, our advice is not to open the floodgates all at once. Opt for a gradual rollout. We started with targeted POCs with partner clients. This provided us with irreplaceable business feedback: no matter how mathematically high-performing an AI is, only real-world usage confirms its true utility.

Conclusion: industrialization, a mastered strategic shift

Moving from a POC to production is not just a technical formality; it is a strategic shift. For AI to deliver sustainable value, it must leave the laboratory and enter a rigorous framework where security, legal compliance, and user experience converge.

Our journey with the triage agent has solidified one core conviction: AI should not be an automated “black box,” but a transparent assistant under human control. By integrating legal requirements from the design phase and subjecting our models to intensive security testing, we have transformed a promising experiment into a trusted tool.

AI industrialization is a continuous cycle. By keeping humans in the driver’s seat and moving forward through measurable stages, you are doing more than just following a trend: you are building robust innovation capable of withstanding real-world challenges.

The success of your next AI project will not depend on the complexity of your algorithms, but on the strength of the structure you build around them.

Are you ready to take the leap into industrialization?

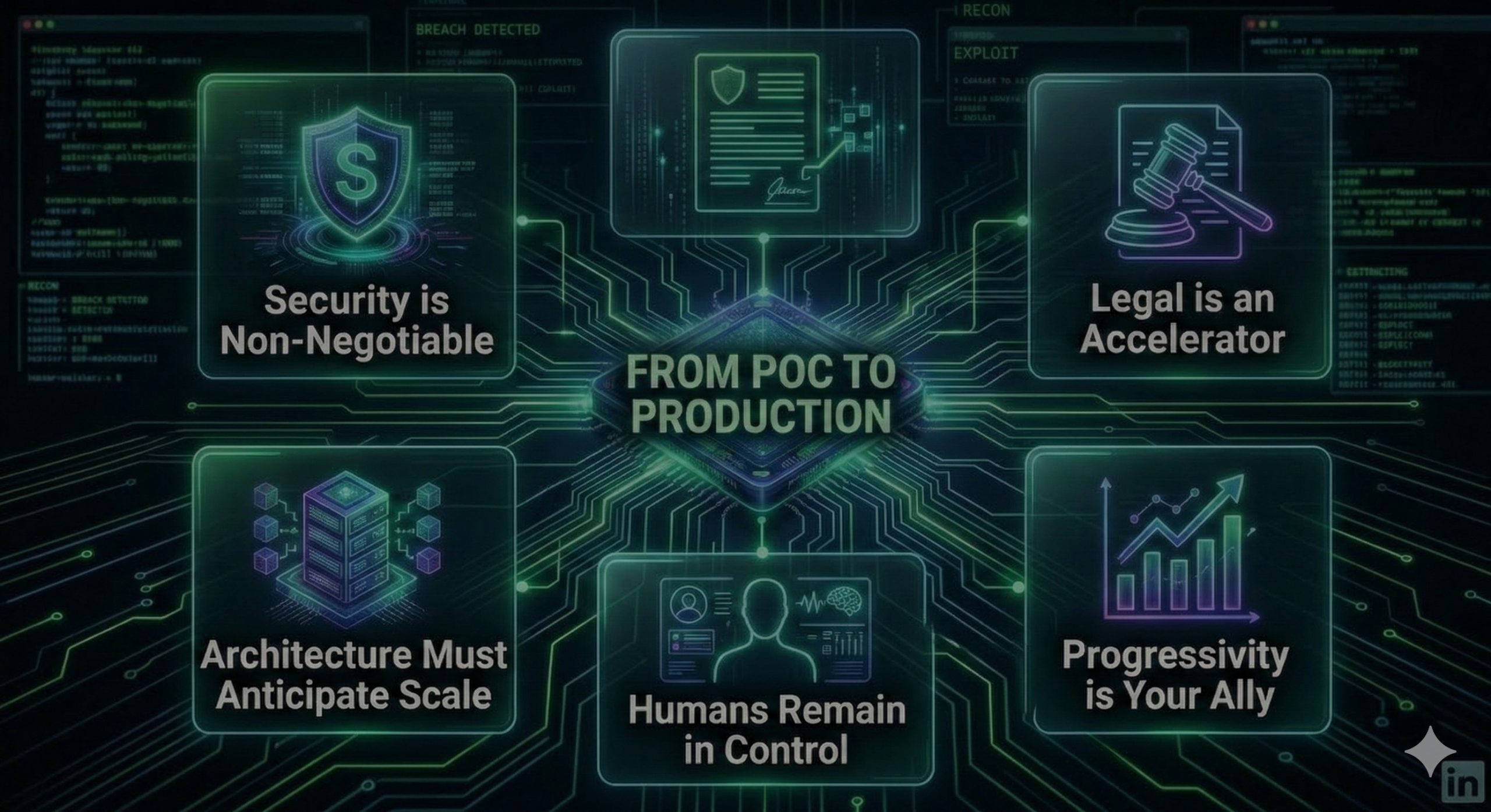

💡 Takeaways: 5 keys to launching your AI agent in production

- Security is non-negotiable: In our industry, a single vulnerability can have critical consequences. The “move fast and break things” approach has no place here.

- Legal is an accelerator, not a hurdle: By involving our legal counsel from the very beginning, we avoided last-minute roadblocks. Compliance (GDPR, AI Act) is a design asset, not an obstacle.

- Architecture must anticipate scale: Our infrastructure choices allowed us to control costs from the start. Moving to production was a matter of adjusting power, not a complete redesign.

- Humans stay in control: Designing the AI as an assistant rather than an automaton was decisive. Our users trust the system because they remain in control.

- Gradual rollout is your best ally: Deploying in successive phases allowed us to correct biases that would have been problematic at scale.

To learn more about launching your AI initiative, choosing your use cases, technologies, and tools, and building skills, we invite you to browse our other articles:

- AI Transformation in 3 Months: How to Create Momentum with the WWWH Approach

- AI pivot, under the hood: use cases, tools & budget

- AI and vulnerability triage: lessons learned from our automated assistance POC

- Understanding and anticipating the impact of AI-based offensive tools in the field of cybersecurity